tl;dr – we must do everything we can to ensure that generative AI doesn’t win.

Back in 2019 we had an idea. We wanted to gather together catalogue-related professionals at UK and US GLAM institutions to discuss the impacts of “legacy” catalogue entries on the quality of collection catalogues, on user experience, on collections-as-data, and on machine reuses of that data. Having spent time investigating the tenor and content of one major art catalogue, and having begun describing – in a paper that would be published the following year – how its circumstances of production had become detached from its usage as (digital) catalogue data, we wondered what happened when that data – and the conceptual/linguistic style of that data – was transmitted across time and between places. And so we planned a rapid programme of research, engagement, and partnership activities that would investigate that transmission, share and iterate our methods, and build sectoral capacity for approaching, understanding, and tackling legacy catalogue data. We put in a bid for a project called ‘Legacies of Catalogue Descriptions and Curatorial Voice: Opportunities for Digital Scholarship’ and we won the money. In February 2020 we got to work. And then the world changed.

The result was a 12-month project that instead lasted 36 months, finally finishing in February 2023 (my huge thanks to the AHRC for allowing us two no-cost extensions). Everything we had planned had to be reconsidered. All community events changed in form and focus. No archival research seemed possible. Budgets were refactored and rethought. And the very nature of our endeavour was under threat: how many GLAM institutions would care about dealing with quirks in their catalogue – or survive long enough to care – after the ruptures of lockdown?

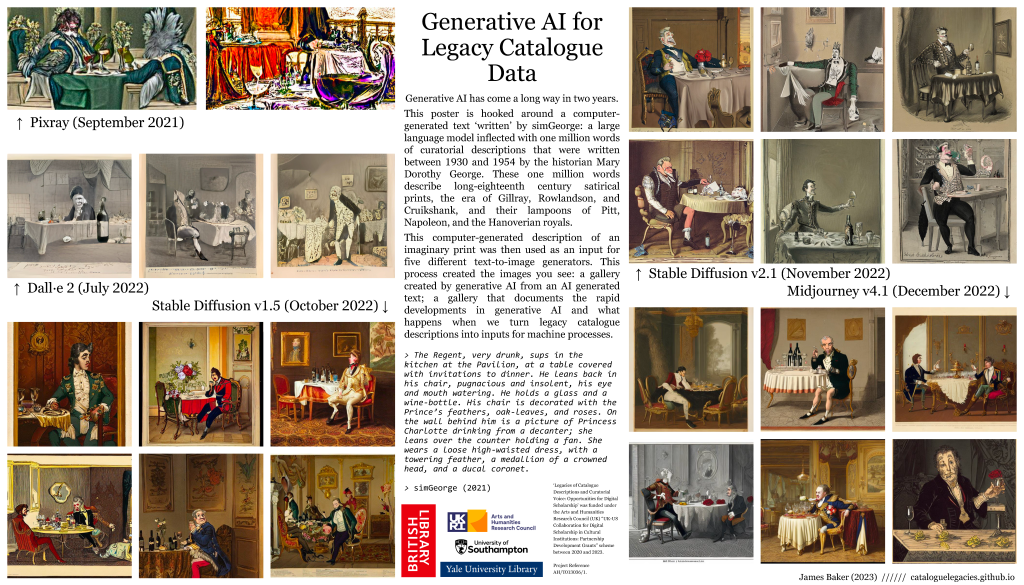

Thankfully, quite a few. Colleagues in the GLAM sector showed a great deal of interest in the project and we learnt a huge amount from their engagement with (and critiques of) our ideas. But it is the other big rupture that happened during the Legacies project that might well have a greater impact on our area of enquiry. This rupture is the explosion of generative AI (if you are unfamiliar with the concept, my colleague Sunil Manghani has written an excellent intro to the topic). We gestured at this in the bid, framed around needing to know more about the “legacy” descriptions that might form the basis of training machines to ‘read’ images. And throughout the project we tracked the remarkable and rapid developments in generative AI: whilst in January 2021 we could barely make a simulated cataloguer (trained on the language of another, Mary Dorothy George) hold together logic across multiple clauses and the images generated from those ‘sim’ descriptions were highly abstract, by the beginning of 2023 generative AI systems – even those using relatively small-scale resources – could generate the look, feel, and style of the images that their source cataloguer described.

Why, in the context of a project on legacy catalogue descriptions, do I see generative AI as such a big rupture? Surely – some of you might be thinking – if I think our continued reliance on legacy descriptions for search and discovery is a problem (and with the greatest respect to figures like George, I do) then I might be interested in what generative AI can do to create new and updated descriptions? I understand that viewpoint, and I also understand that as someone with digital in their job title (for the third time!) I might be seen as an advocate of computational approaches to problems. The thing is, I’m not a techno-solutionist. And I see using generative AI in the context of cataloguing as techno-solutionism because investing in people, expertise, and labour would produce better outcomes (I’m slightly warmer on AI-assisted cataloguing, such as this workflow, but remain unsure). Indeed an aim of my work in this space, going right back to the ‘Curatorial Voice’ project, has always been to advocate for investment in cataloguing staff and their expertise, to highlight the problems of relying on decades-old descriptions of collections (rather than investing in staff to update, refresh, and revise those descriptions), and to create opportunities for GLAM professionals to develop skills that can help them articulate the scale and nature of these legacy voices in their catalogues, so that they are better able to make the case for investment in themselves and their work.

Midjourney v5’s “/describe” prompt command does do a good job of taking an image file as an input and creating a textual representation of what appears in the image file. And perhaps this part of image, art, and object cataloguing can benefit from this kind of assistance to create basic text for user discovery and access.

But describing what is there isn’t the valuable bit of cataloguing labour – representing things that appear is not seeing (and any seeing that is being done is anchored in infrastructures of white sight). [edit 11 April 2023: I realise that is a bit blunt, and that depending on the complexity of the object, describing what is there does of course have value; I guess what I was trying to get at is that IMO the more basic ‘say what you see’ logic of things like “/describe” isn’t the valuable bit of cataloguing labour] The valuable bit is telling the story of a satirical print, teasing out multiple layers of illusion, narrative, and social commentary. It is drawing in a range of historical and cultural contexts, the motivations behind the creation of an artwork, the spaces, movements, and vibes an artist was responding to, reacting to, railing against (but never, per Baxandall, influenced by). It is the rewriting of previous interpretations in previous catalogues, and the recognition that any act of cataloguing is provisional, that all printed catalogues are live objects, ripe for annotation from the moment they are printed (I’ve been working a little on the Judy Egerton archive at the Paul Mellon Centre for Studies in British Art, and her desk copies of her own exhibition catalogues – especially Wright of Derby (1990) and George Stubbs (1984) – are fascinating examples of this phenomenon; added to which, Egerton was a delightful exponent of the art of passive-aggressively dismantling a previous – apparently desperately wrong – interpretation, but I digress).
It is the understanding of how cataloguing interacts and intersects with other forms of information retrieval system, and with information retrieval systems at other institutions (recent archival research at Lewis Walpole Library during the final stages of Legacies underscored for me that cataloguing always takes place in a network of systems, that an old card catalogue, marginal notes on a print, and new MARC records on a database are often all intended to interact – even if that intention is not stated explicitly – rather than be fully useable in isolation). And it is undoing previous wrongs, centering cataloguing in the context of (often forcible) colonial collecting, the culture of the colonial museum, library, and archive, and the narratives told by colonizers about the importance of the objects in their collections. Cataloguing, as Hannah Turner (magnificently) argues, is the product of culture. And culture in turn needs cataloguers. It needs people like Kathleen Lawther, and their call for more justice-oriented cataloguing labour that is more person-centered in its interpretation of collections and more explicit – at a meta cataloguing level – about who did what, why, and when during the process of cataloguing (see ‘People-Centered Cataloguing’ (2023)).

In their wonderful ‘The Crying Child: On Colonial Archives, Digitization, and Ethics of Care in the Cultural Commons’, Temi Odumosu writes:

> Alongside others who share the discomfort of unmediated access to, and batch scanning of, cultural memory, I too turn my attention to further troubling images; revisiting those breaches (in trust) and colonial hauntings that follow photographed Afro-diasporic subjects from moment of capture, through archive, into code. (p. S290)

In turning over cataloguing to generative AI we risk repeating the errors of batch scanning cultural memory. We know – thanks to amazing work by people like Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, Margaret Mitchell, and Abeba Birhane – that (as currently commonly deployed) generative AI tends to reproduce systems of oppression. The people who created and/or are represented in our collections deserve better than ‘say what you see’ machines batch generating descriptions of images such as those Odumosu discusses in ‘The Crying Child’; they deserve skilled professionals making judgements, teasing out illusions, and attempting to right wrongs. And even if they get things wrong – and researching the histories of cataloguing tends to suggest that cataloguers, as producers of temporally- and context-specific labour, do get things wrong – at least by investing in people we retain and grow a professional space with the tools to get it right.

A final thought. Throughout the Legacies project we worked with cataloguing professionals to understand their concerns, needs, and priorities in relation to “legacy” catalogue data. Last year we published an infographic (designed by Lucy Havens) that tried to capture those interactions.

In a world before everyone was talking about ChatGPT, generative AI featured prominently: cataloguers were ahead of the curve. As the Legacies project winds up and our research into cataloguing and catalogue data moves into its next phase, I look forward to continuing to work with colleagues in the GLAM sector on turning these priorities into action, to advocate for their expertise, and to push back against a tide of generative AI not built for us and our purposes. As Gebru, Bender, McMillan-Major, and Mitchell write: “we do not agree that our role is to adjust to the priorities of a few privileged individuals and what they decide to build and proliferate”. I fully concur.

Legacies of Catalogue Descriptions was a collaboration between Southampton Digital Humanities, Sussex Humanities Lab, the British Library, and Yale University Library. The project ran between February 2020 and February 2023. Huge thanks to my co-conspirators Rossitza, Cindy, and Andrew for putting up with me.

The project was funded under the Arts and Humanities Research Council (UK) “UK-US Collaboration for Digital Scholarship in Cultural Institutions: Partnership Development Grants” scheme. Project Reference AH/T013036/1. Huge thanks to the AHRC for their support and encouragement during an extremely challenging time to do transatlantic research and partnership building.
